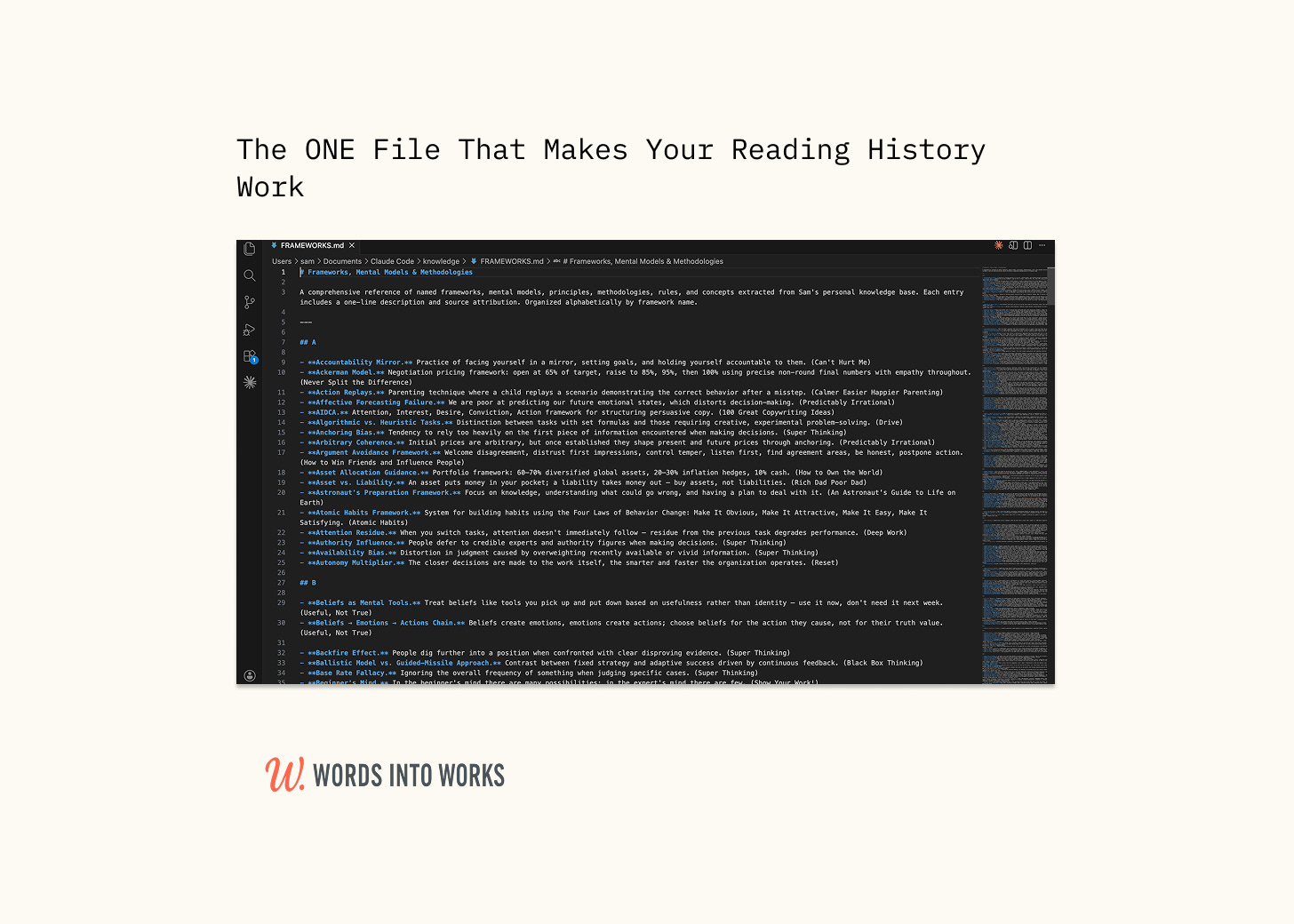

The File That Makes Your Reading History Work

How to Build the Extraction Layer That Turns Book Summaries Into Knowledge Claude Reasons From

This is Part 3 of the three-part Claude Second Brain series. If you’re a new reader, catch up on Part 1 and Part 2.

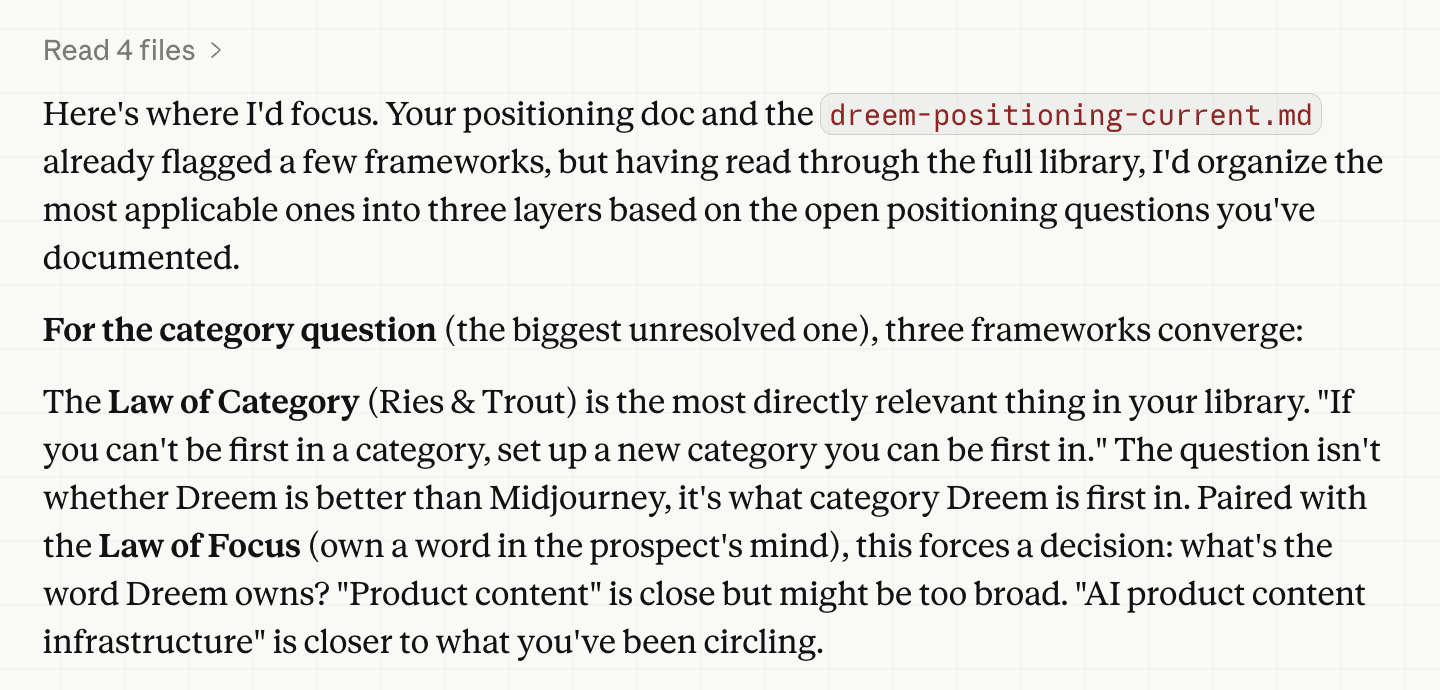

Recently, I asked Claude to help me think through a positioning issue I was working on for a feature launch.

Rather than giving me generic advice, it surfaced three frameworks directly relevant to how I was thinking about the problem—the Law of Category, the New Opportunity vs. Improvement Offer distinction, and the Epiphany Bridge—pulled from a file called FRAMEWORKS.md, a distillation of everything I’ve added to my Second Brain.

Inside, 330 named mental models, one line each, alphabetized and sourced from everything I’ve ever thought I might reference one day. I hadn’t told it which ones to use. It already knew.

That’s the part most people skip. They give Claude their raw summaries and assume it’ll figure out what’s relevant. It won’t, or at least, not well. After all, a folder of 40 markdown files isn’t a knowledge base; it’s a pile. So, Claude ends up wading through 40 pages of notes to answer a question that should take seconds.

Asking Claude to build FRAMEWORKS.md—and BENCHMARKS.md if you want to go further—is the extraction layer that turns your reading history into something Claude can reason from.

In this issue, I’ll show you how to build it.

The Prompt That Does the Work

Point Claude at your book summaries folder and ask it to extract.

Here’s the prompt I used:

I have a folder of book summaries at `knowledge/book-summaries/`. Each file is a markdown summary of a book I've read. Read through all of them and build two files:

**FRAMEWORKS.md** — every named mental model, framework, principle, or methodology you find. One line each. Format: **Name.** Description. (Source)

**BENCHMARKS.md** — every specific number, statistic, percentage, or quantified finding. Organized by domain. Format: **Finding.** Context. *Source*

Alphabetize FRAMEWORKS by framework name. Group BENCHMARKS by domain (Writing, Decision-Making, Finance, etc.). Save both files to `knowledge/`.

Here’s a sample of what came out when I ran it against five books I’d never extracted from—Reset, Same As Ever, The Art of Spending Money, Useful, Not True, and The Wealth Ladder:

Happiness = Expectations Gap. Satisfaction comes from wants growing slower than results — not from objective conditions. (Same As Ever)

Hedonic Adaptation (Spending). You get used to luxury purchases within months and need escalating upgrades — the hedonic treadmill never stops unless consciously stepped off. (The Art of Spending Money)

Leverage Points. Critical spots within a system where small focused interventions produce disproportionately large positive results. (Reset)

Useful Beliefs (Not True). Some beliefs aren’t objectively true but are useful to hold — “everything happens for a reason” helps people survive trauma even if false. (Useful, Not True)

Wealth Ladder Framework. Six-tier system organizing wealth from under $10k to over $100 million, where each level represents a 10x jump in net worth and requires fundamentally different financial strategies. (The Wealth Ladder)

Forty entries from five books. One pass. Twenty minutes.

Once the files exist, add this to your CLAUDE.md—the config file that tells Claude what to load and how to behave at the start of every session:

## Knowledge Base

At the start of every session, load:

- `knowledge/FRAMEWORKS.md` — named mental models from my reading

- `knowledge/BENCHMARKS.md` — specific statistics and quantified findings

Use these actively. When a framework or benchmark is relevant, surface it without being asked.

That last line is the one most people miss. Without it, FRAMEWORKS.md is a filing cabinet. With it, it’s a thinking partner. “Surface it without being asked” is what makes the difference.

For large libraries (100+ book summaries), run in batches of 20–30 and merge the outputs afterward, and Claude handles that range cleanly in one pass.

The Library That Reads Back

Your knowledge base doesn’t get smarter when you read more. It gets smarter when you extract better.

Think back to my positioning question at the start of this issue. Three weeks before I built FRAMEWORKS.md, I would have had to search my own notes, remember which book had the relevant framework, dig it out, and explain it to Claude before it could use it. Instead I asked a question, and the answer came from my own reading history without any of that.

That’s the shift. Not in what Claude can do, but in what it can do with what you already know.

Once it’s running, ask Claude: “Based on everything in my knowledge base, what’s missing from how I think about this?” I ran that prompt after building mine. The gaps it surfaced weren’t things I didn’t know. Rather, they were things I’d read but never connected.

If you enjoyed this read, the best compliment I could receive would be if you shared it with one person or restacked it.